Artificial intelligence is no longer a future conversation for nonprofits. It is already here. Teams are using it to draft grant proposals, analyze donor data, write social media posts, summarize reports, and even support beneficiary communication. The tools are accessible, affordable, and increasingly powerful.

But access alone is not enough.

For mission-driven organizations, the real question is not “Can we use AI?” It is “How do we use AI responsibly?”

Nonprofits operate on trust. Donors trust you with their money. Beneficiaries trust you with their stories. Staff trust you with their work and, in many cases, their personal data. Introducing AI into that environment without clear boundaries can quietly create ethical, legal, and reputational risks.

Responsible AI use is not about fear. It is about clarity. What follows is a practical framework nonprofits can adopt to use AI wisely while protecting their mission.

1. Start With Purpose, Not Hype

AI should serve your mission, not distract from it. Before adopting any tool, define the problem you are trying to solve. Are you trying to reduce administrative workload? Improve reporting accuracy? Strengthen donor engagement? Expand research capacity?

When adoption is driven by curiosity or pressure to keep up, implementation often becomes messy. When adoption is tied to a clear operational goal, results are more measurable and sustainable.

Responsible AI begins with intentional use cases.

2. Protect Data as a Non-Negotiable

Nonprofits often handle sensitive information such as donor details, financial records, case notes, and personal beneficiary stories. Feeding this information into public AI tools without understanding data policies can create serious risks.

Before using any AI platform, review its data handling practices. Ask clear questions. Is the data stored? Is it used to train future models? Can it be deleted? Who has access to it?

Vendors such as OpenAI and Microsoft publish documentation on how their enterprise products handle data, but free or public versions of the same tools may not offer the same protections.

As a simple rule, never input confidential beneficiary or donor information into a system unless you fully understand how that data is processed and stored.
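One way to put this rule into practice is to strip obvious identifiers from text before it leaves your systems. The sketch below is illustrative only: the `redact` function and both patterns are hypothetical, they catch only email addresses and phone-like numbers, and they do not detect names, addresses, or case details. Treat it as a first filter, not a guarantee.

```python
import re

# Hypothetical patterns for illustration; real data needs a broader, tested set.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_PATTERN = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    text = PHONE_PATTERN.sub("[PHONE]", text)
    return text


note = "Follow up with jane.doe@example.org or call +1 555 010 0199 about her case."
print(redact(note))
```

Even with a filter like this in place, a person should still read the text before it is pasted into any external tool, because free-form notes often identify people in ways no pattern will catch.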

Trust is your greatest asset. Protect it.

3. Keep Humans in the Loop

AI can generate content quickly, but it does not understand context the way your team does. It can draft a grant proposal, but it cannot feel the urgency of your community. It can analyze trends, but it cannot interpret nuance without guidance.

Every AI-generated output should be reviewed by a human before publication or submission. This is especially important for financial analysis, public communication, legal language, and programmatic decisions.
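If your content passes through any kind of internal tool or script, the review step can be enforced rather than left to memory. The following is a minimal sketch under assumed names (`Draft`, `can_publish`, and the reviewer label are all hypothetical): an AI-generated draft cannot be published until a named person has signed off.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Draft:
    text: str
    ai_generated: bool
    reviewed_by: Optional[str] = None  # name or role of the human reviewer, if any


def can_publish(draft: Draft) -> bool:
    """AI-generated drafts require a named human reviewer before release."""
    if draft.ai_generated and not draft.reviewed_by:
        return False
    return True


proposal = Draft(text="Grant proposal draft...", ai_generated=True)
blocked = can_publish(proposal)      # no reviewer recorded yet

proposal.reviewed_by = "Program Director"
released = can_publish(proposal)     # reviewer recorded

print(blocked, released)
```

Recording who reviewed a draft, not just whether it was reviewed, also supports accountability later.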

AI can assist. It should not decide.

Responsible nonprofits treat AI as a copilot, not an autopilot.

4. Be Transparent When It Matters

Transparency builds credibility. If AI is used in a way that significantly shapes donor communication, beneficiary interaction, or research outputs, consider whether disclosure is appropriate.

This does not mean you must label every social media caption. It means you should not present AI-generated insights as purely human research. It also means never using automated systems to fabricate data or testimonials.

Your reputation is worth more than efficiency gains.

5. Audit for Bias and Fairness

AI systems are trained on large datasets that may contain biases. This can influence outputs in subtle ways. For nonprofits working in sensitive areas such as education, health, gender equity, or economic inclusion, bias can cause harm.

Regularly review AI-generated recommendations and content for unintended bias. Question assumptions. Cross-check outputs with lived experience and local context.

Responsible use requires awareness that AI reflects the data it was trained on, not necessarily the communities you serve.

6. Build an Internal AI Policy

Even small nonprofits benefit from a simple written guideline. An AI policy does not need to be complicated. It can include:

• Approved tools

• Data that must never be entered into AI systems

• Review and approval processes

• Expectations around transparency

• Accountability for misuse

Clear guidance reduces confusion and protects staff. It also signals maturity to partners and funders who increasingly care about digital governance.
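For teams that want the policy to be checkable as well as readable, the five elements above can be written down as structured data. This is a sketch, not a template: the `AIPolicy` fields, the `check_use` helper, and every example value (tool names, data categories, roles) are hypothetical placeholders for your own policy.

```python
from dataclasses import dataclass, field


@dataclass
class AIPolicy:
    approved_tools: set = field(default_factory=set)           # approved tools
    prohibited_data: set = field(default_factory=set)          # data never entered into AI systems
    reviewer_role: str = ""                                    # who reviews and approves outputs
    disclosure_required_for: set = field(default_factory=set)  # where transparency is expected
    policy_owner: str = ""                                     # who is accountable for misuse


def check_use(policy: AIPolicy, tool: str, data_categories: list) -> list:
    """Return a list of policy problems; an empty list means the use is allowed."""
    problems = []
    if tool not in policy.approved_tools:
        problems.append(f"Tool not approved: {tool}")
    for category in sorted(set(data_categories) & policy.prohibited_data):
        problems.append(f"Prohibited data category: {category}")
    return problems


# Example values are placeholders, not recommendations.
policy = AIPolicy(
    approved_tools={"ExampleAssistant"},
    prohibited_data={"beneficiary case notes", "donor payment details"},
    reviewer_role="Communications Lead",
    disclosure_required_for={"research reports"},
    policy_owner="Executive Director",
)

print(check_use(policy, "ExampleAssistant", ["public annual report"]))
print(check_use(policy, "UnknownChatbot", ["donor payment details"]))
```

The written guideline remains the source of truth; a structure like this only makes it easier to apply consistently.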

7. Invest in Training, Not Just Tools

Buying access to AI tools without equipping your team to use them responsibly creates risk. Staff should understand not only how to prompt effectively but also how to evaluate outputs critically.

Training should include data privacy awareness, ethical considerations, and practical limitations. AI can sound confident even when it is wrong. Teaching your team to question and verify is essential.

Responsible use is a skill, not a feature.

8. Measure Impact, Not Activity

It is easy to celebrate productivity gains. Faster reports. More content. Quicker research summaries.

But the real question is whether AI is improving outcomes. Is it strengthening donor retention? Reducing staff burnout? Improving service delivery? Increasing funding success rates?

If AI increases output but lowers quality or trust, it is not a net positive. Responsible adoption focuses on measurable mission impact.

A Simple Responsible AI Checklist

Before implementing AI in any workflow, ask:

• Does this align with our mission and values?

• Are we protecting sensitive data?

• Is a human reviewing the output?

• Are we transparent where necessary?

• Have we considered potential bias?

• Do we have a clear policy guiding use?

If you cannot confidently answer yes to these questions, pause before proceeding.
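The checklist can also be kept as a short script or form so that every new workflow gets the same six questions. The sketch below assumes a hypothetical `open_questions` helper that treats anything other than an explicit yes as a reason to pause.

```python
CHECKLIST = [
    "Does this align with our mission and values?",
    "Are we protecting sensitive data?",
    "Is a human reviewing the output?",
    "Are we transparent where necessary?",
    "Have we considered potential bias?",
    "Do we have a clear policy guiding use?",
]


def open_questions(answers: dict) -> list:
    """Return the checklist questions that were not answered with a clear yes."""
    return [question for question in CHECKLIST if answers.get(question) is not True]


# Example: every question answered yes except the bias review.
answers = {question: True for question in CHECKLIST}
answers["Have we considered potential bias?"] = False

remaining = open_questions(answers)
if remaining:
    print("Pause before proceeding. Unresolved:")
    for question in remaining:
        print(" -", question)
else:
    print("All checks passed.")
```

Missing answers count as unresolved by design, so a half-completed checklist never reads as approval.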

The Bigger Picture

AI has the potential to reduce administrative burdens, unlock insights from complex data, and expand nonprofit capacity in ways that were previously impossible. For resource-constrained organizations, this can be transformative.

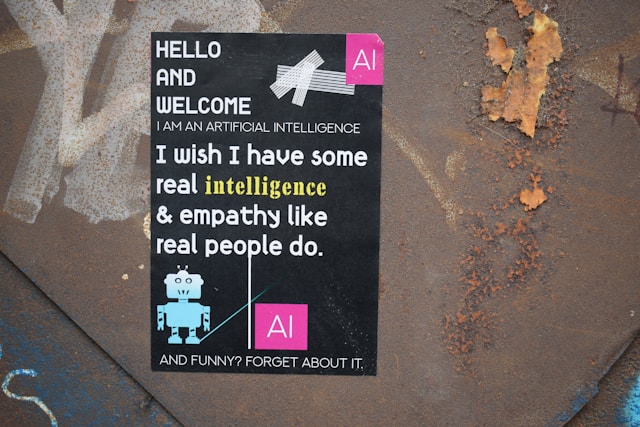

But technology does not replace judgment. It does not replace empathy. It does not replace accountability.

Responsible AI use in nonprofits is not about avoiding innovation. It is about stewarding it wisely. When approached with clarity and care, AI can strengthen your systems without compromising your values.

In the nonprofit world, impact matters. So does integrity. The organizations that thrive will be those that hold both together.